Welcome to Memorandum Deep Dives, a weekly series examining the decisions reshaping the digital future 🗞️

This week, we examine the classified agreement Google signed with the Pentagon on April 28, 2026, that places its Gemini AI models on military networks used for mission planning and weapons targeting. The deal completes an eight-year reversal for the company, which once retreated from defense work under employee pressure and pledged never to build AI for weapons or surveillance.

The signing came one day after more than 600 Google employees, including over 20 directors and vice presidents from DeepMind and Cloud, sent an open letter to Sundar Pichai asking him to refuse classified military work. Pichai's answer arrived as a contract.

What looks like another defense procurement sits inside a broader shift that has played out across the AI industry over the past few months. Anthropic, OpenAI, xAI, and now Google each met the Pentagon's terms in their own way, and the paths they took say as much about the new defense market as Google's contract itself.

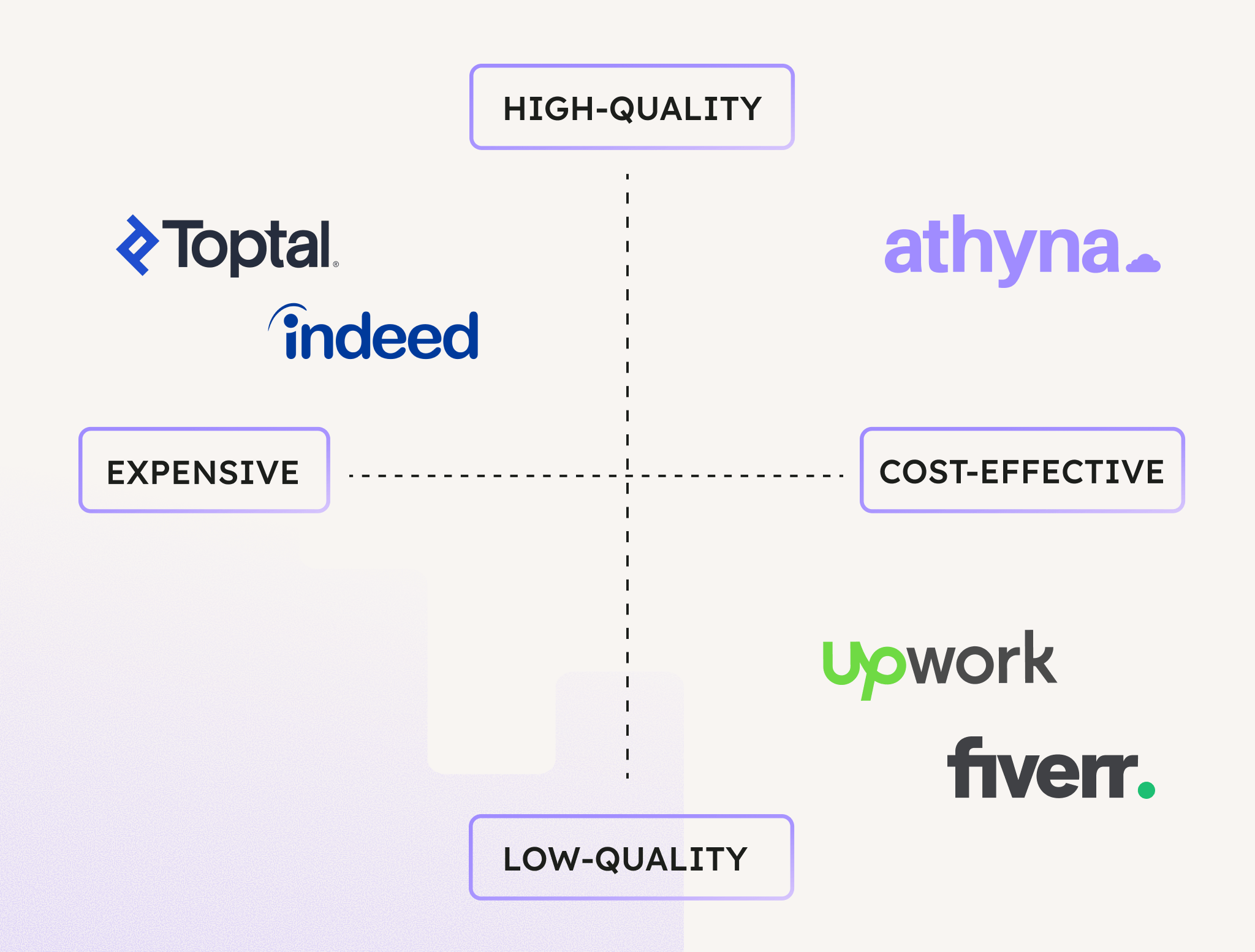


How Google went from refusing Project Maven to signing a classified Pentagon contract

Over the past few months, the relationship between Big Tech and the U.S. military has come under sharper scrutiny, partly because Anthropic reportedly resisted Pentagon demands for complete control over how and where its AI systems are deployed. That tension makes Google’s latest move all the more revealing. The company recently signed a deal to make its Gemini models available on the Pentagon’s classified networks, completing a dramatic reversal that began eight years ago, when Google retreated from military AI under employee pressure.

In 2018, Google employees signed a petition calling on the company to withdraw from Project Maven. At the time, Google backed down, let the contract expire, and published a set of AI principles pledging it would never build technology for weapons or surveillance.

However, on April 28, 2026, Google signed a classified agreement with the U.S. Department of Defense that allows the Pentagon to use its Gemini AI models for “any lawful government purpose.”

The distance between those two moments is not just eight years. It is the distance between a company that believed it could set boundaries with the U.S. military and one that has concluded it cannot.

The timing sharpens the significance: the deal came one day after more than 600 Google employees, including over 20 directors and vice presidents from DeepMind and Cloud, sent an open letter to CEO Sundar Pichai asking him to refuse classified military work.

They argued that AI systems “make mistakes” and “centralize power,” and that classified networks are “by definition opaque,” making it impossible for Google to ensure its technology is not misused. Pichai’s answer came in the form of a signed contract.

What does ‘classified’ actually mean?

Google was already deeply involved with the Pentagon before this agreement was signed. Since December 2025, its Gemini for Government has powered GenAI.mil, the Defense Department’s enterprise AI platform, serving more than 1.3M active users across all military branches for unclassified work.

But classified networks are a different category entirely since they handle mission planning and weapons targeting. Moving Gemini onto those networks means the same models that help draft administrative reports and summarize documents can now operate in environments where decisions carry lethal consequences, and where Google has no visibility into how the technology is being used.

From Maven to Gemini, the eight-year reversal

Back in 2018, roughly 4,000 Google employees signed an internal petition protesting Project Maven, a Pentagon program that used AI to analyze drone surveillance footage. At least 12 employees resigned over the project, and under that pressure Google declined to renew the Maven contract. Later that year, it published a set of AI principles pledging not to pursue technologies for use in weapons or surveillance.

The principles were maintained for about seven years, though the company’s behavior around them gradually shifted. In 2022, Google won a share of the Pentagon’s $9B Joint Warfighting Cloud Capability contract. In April 2024, Google fired 28 employees who staged sit-in protests against Project Nimbus, a $1.2B joint cloud contract with Amazon that provides the Israeli government and military with cloud computing and AI services.

Then came the formal policy change: on February 4, 2025, Google revised its AI principles, removing the explicit pledges not to develop AI for weapons or surveillance.

In a blog post co-authored by Google DeepMind CEO Demis Hassabis, the company cited “a global competition taking place for AI leadership within an increasingly complex geopolitical landscape.” At the time, Al Jazeera noted that the previous principles had included a section listing four categories of applications the company would not pursue, and that these were no longer part of the updated policy.

By December 2025, Google was the first AI provider on the Pentagon’s new GenAI.mil platform, and the classified agreement signed in April 2026 was an extension of that existing relationship, not a sudden departure from it.

The terms the Pentagon set

The “any lawful government purpose” language in Google’s contract echoes the exact phrasing that became the fault line between the Pentagon and Anthropic earlier this year.

Before the fallout, Anthropic had signed a $200M contract with the Pentagon and was the first AI lab deployed on classified military networks. But when negotiations moved to broader deployment, Anthropic insisted on maintaining two guardrails: its technology could not be used for fully autonomous weapons or domestic mass surveillance. The Pentagon wanted unrestricted access across all lawful purposes, but Anthropic refused to concede.

President Trump ordered federal agencies to cease using Anthropic’s Claude models, prompting Anthropic to file two federal lawsuits.

OpenAI moved into the gap on February 27, announcing a classified Pentagon deal with three stated red lines: its models could not be used for mass surveillance of Americans, could not independently direct autonomous weapons, and could not autonomously make high-stakes decisions that require human approval.

Critically, OpenAI said it would retain control of its safety stack and deploy cleared engineers inside classified settings. xAI signed a similar classified deal, though its terms have been less publicly documented.

And now, Google’s agreement follows the same trajectory, placing it alongside the others in a growing group of frontier AI labs supplying models for classified military use.

The guardrail question

Google’s contract includes language stating that the AI system “is not intended for, and should not be used for, domestic mass surveillance or autonomous weapons (including target selection) without appropriate human oversight and control.” At the same time, the agreement specifies that Google cannot “control or veto lawful Government operational decision-making,” raising the question of whether contractual language can serve as a meaningful constraint once the government controls the network.

There is also a deeper structural issue in Google’s contract. The Information reported that Google’s agreement requires the company to help modify its AI safety settings and filters at the government’s request, a detail also highlighted by 9to5Google. That is materially different from retaining independent control over those safeguards, which is the model OpenAI has said it follows in its own government partnerships. In an air-gapped, classified network where Google cannot monitor the prompts or queries being run, language stating that the system “should not be used for” certain purposes begins to function less as an enforceable restriction and more as guidance.

Meanwhile, the Pentagon’s position is that existing law already restricts mass surveillance and autonomous weapons, and that private companies should not be layering additional restrictions on top of the legal framework.

Pentagon CTO Emil Michael argued that the department “can’t have a company that has a different policy preference that is baked into the model pollute the supply chain.”


What the new defense market looks like

With the latest deal between Google and the Pentagon, defense AI is no longer a side project for Big Tech companies.

In this landscape, Google’s Pentagon agreement captures a broader shift in the politics of artificial intelligence.

The old model, in which technology companies could market themselves as neutral platforms while selectively distancing themselves from military power, is giving way to something more explicit and transactional. Frontier AI systems are now strategic assets, governments want assured access to them, and the companies building those systems increasingly see defense contracts as too valuable to refuse. What once triggered internal revolt at Google is now absorbed as a manageable cost of doing business.

Employee protests, reputational criticism, and earlier ethical pledges appear secondary to revenue, influence, and proximity to state power. That does not mean corporate values have disappeared; they appear to have been repriced in light of geopolitical opportunity. Google’s reversal from Project Maven to Gemini on classified networks shows how quickly principles can bend when markets and governments move together. In that sense, the deal is not an exception but an early blueprint for the next phase of Big Tech.

